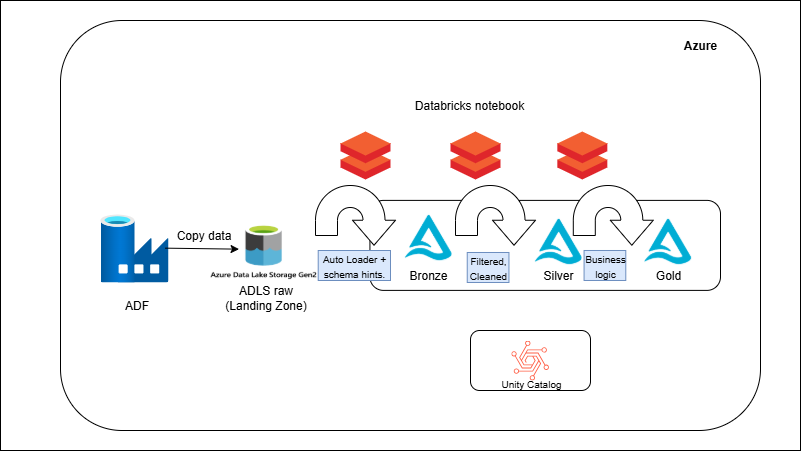

Files arrive in the ADLS (Azure Data Lake Storage) raw zone and are ingested by ADF (Azure Data Factory) into Bronze as Delta tables. Databricks notebooks read Bronze and apply cleaning and conformance to produce Silver (row-level quality checks, proper types, SCD-ready joins). A second notebook aggregates and enriches Silver into Gold for BI/ML. Tables are partitioned (e.g., by date) and Z-Ordered on commonly filtered columns; periodic `OPTIMIZE` (small-file compaction) and `VACUUM` (removal of data files the table no longer references) runs keep query performance and storage healthy. ADF triggers orchestrate Bronze→Silver→Gold in sequence, and each run is logged.
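As a rough illustration of the Bronze→Silver→Gold steps described above, the sketch below uses plain Python lists as a stand-in for Delta tables; in a Databricks notebook the same logic would normally be written with Spark DataFrames. The order columns, the sample rows, and the function names (`to_silver`, `to_gold`, `run_pipeline`) are assumptions made for illustration and are not taken from the actual pipeline.

```python
from collections import defaultdict
from datetime import datetime
from decimal import Decimal, InvalidOperation

# Hypothetical Bronze rows: untyped strings, exactly as ingested from the raw files.
BRONZE_ROWS = [
    {"order_id": "1", "order_date": "2024-01-05", "amount": "19.99"},
    {"order_id": "2", "order_date": "2024-01-05", "amount": "5.01"},
    {"order_id": "2", "order_date": "2024-01-05", "amount": "5.01"},  # duplicate
    {"order_id": "3", "order_date": "not-a-date", "amount": "7.50"},  # bad date
    {"order_id": "4", "order_date": "2024-01-06", "amount": ""},      # missing amount
    {"order_id": "5", "order_date": "2024-01-06", "amount": "10.00"},
]


def to_silver(bronze_rows):
    """Cast to proper types, drop rows that fail to parse, de-duplicate on order_id."""
    silver, seen_ids = [], set()
    for row in bronze_rows:
        try:
            typed = {
                "order_id": int(row["order_id"]),
                "order_date": datetime.strptime(row["order_date"], "%Y-%m-%d").date(),
                "amount": Decimal(row["amount"]),
            }
        except (KeyError, ValueError, InvalidOperation):
            continue  # row-level quality check failed; skip the row
        if typed["order_id"] in seen_ids:
            continue  # keep only the first occurrence of each order
        seen_ids.add(typed["order_id"])
        silver.append(typed)
    return silver


def to_gold(silver_rows):
    """Aggregate Silver into one row per order_date (order count and total amount)."""
    totals = defaultdict(lambda: {"order_count": 0, "total_amount": Decimal("0")})
    for row in silver_rows:
        bucket = totals[row["order_date"]]
        bucket["order_count"] += 1
        bucket["total_amount"] += row["amount"]
    return [{"order_date": day, **totals[day]} for day in sorted(totals)]


def run_pipeline(bronze_rows):
    """Run the stages in order and record a simple run log (row counts per stage)."""
    run_log = []
    silver = to_silver(bronze_rows)
    run_log.append(f"bronze->silver: {len(bronze_rows)} rows in, {len(silver)} rows out")
    gold = to_gold(silver)
    run_log.append(f"silver->gold: {len(silver)} rows in, {len(gold)} rows out")
    return gold, run_log
```

Calling `run_pipeline(BRONZE_ROWS)` returns the daily aggregates together with the per-stage log lines. Keeping each stage as a separate function mirrors the one-notebook-per-layer layout, so a stage can be rerun or tested on its own.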