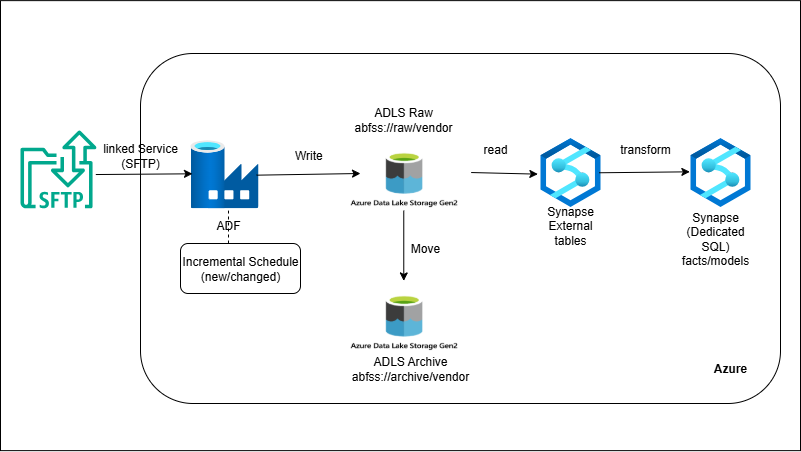

External SFTP → ADF → ADLS → Synapse

Overview

ADF connects to the vendor’s SFTP using a linked service (key-based auth), lists new CSV/JSON files, and copies them into ADLS raw with a strict folder scheme (e.g., `vendorA/ingest/dt=YYYY-MM-DD/`). A validation step (mapping/Data Flow) enforces schema and row counts; failures go to a reject path. Valid files load into Synapse—either via external tables or COPY INTO—into staging, followed by a MERGE into modeled fact/dim tables. Files are archived for audit, and ADF retries/alerts handle transient issues. A trigger runs the pipeline on cadence.

Outcome

- Secure SFTP ingestion into ADLS with ADF linked services.

- Trustworthy loads via schema/metadata checks and retries/alerts.

- Analytics-ready Synapse tables with incremental merges.

What you’ll build

- ADF pipeline with SFTP Linked Service + datasets; key-based auth.

- Copy activity: SFTP → ADLS (raw) with date/vendor foldering and optional gzip.

- Validation step: filename pattern, row counts, schema (mapping or Data Flow).

- Synapse load: external table/COPY INTO to staging; MERGE to facts/dims.

- Archival in ADLS (`/archive/`) and reject path for bad files.

- Retries + alerts (Activity retry, pipeline failure email/Webhook).

- (Optional) ADF triggers for schedule; dependency chain raw → stage → model.