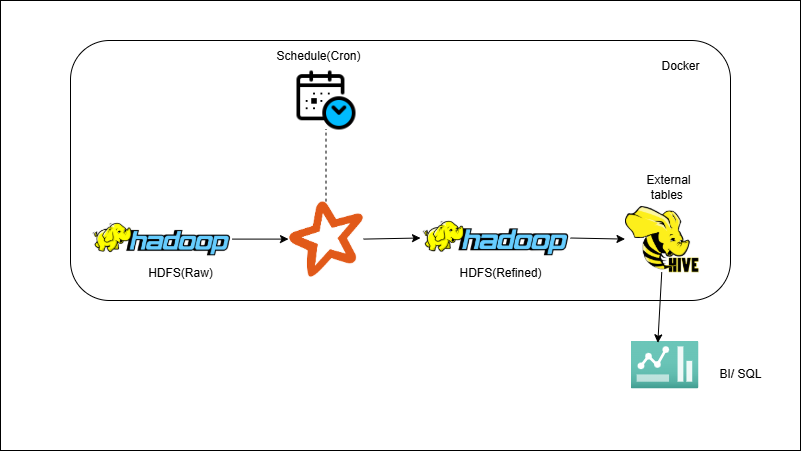

Files on HDFS → PySpark ETL → Parquet/ORC → Hive (Batch ETL)

Overview

You’ll read raw CSV/JSON files from HDFS into Spark DataFrames, apply core ETL logic (schema checks, deduplication, lookup enrichment), and write optimized Parquet/ORC datasets partitioned for performance. You’ll then register external Hive tables over the refined paths for analytics and schedule the job with cron or Airflow to keep the refined layer fresh.
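
The read → transform → write flow described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual job: the column names (`order_id`, `customer_id`, `amount`, `updated_at`), the derived `dt` partition column, and the paths passed to `run_etl` are all assumptions made for the example.

```python
from typing import Iterable, List

# Hypothetical required columns, for illustration only.
REQUIRED_COLUMNS = ["order_id", "customer_id", "amount", "updated_at"]


def missing_columns(actual: Iterable[str], required: Iterable[str]) -> List[str]:
    """Return the required column names that are absent from `actual`."""
    present = set(actual)
    return [c for c in required if c not in present]


def run_etl(spark, raw_path: str, lookup_path: str, out_path: str) -> None:
    """Read raw CSV, validate, dedupe, enrich, and write partitioned Parquet."""
    # Imported here so missing_columns() can be used without Spark installed.
    from pyspark.sql import Window, functions as F

    # Without schema inference, every CSV column is read as a string.
    raw = spark.read.option("header", True).csv(raw_path)

    # Schema check: fail fast if an expected column is missing.
    missing = missing_columns(raw.columns, REQUIRED_COLUMNS)
    if missing:
        raise ValueError(f"raw data is missing columns: {missing}")

    # Dedupe: keep the most recent row per order_id
    # (assumes updated_at is an ISO-formatted timestamp string).
    latest = Window.partitionBy("order_id").orderBy(F.col("updated_at").desc())
    deduped = (
        raw.withColumn("_rn", F.row_number().over(latest))
        .filter(F.col("_rn") == 1)
        .drop("_rn")
    )

    # Enrich: left join against a small lookup table, with a broadcast hint
    # so the large side does not need to be shuffled.
    customers = spark.read.option("header", True).csv(lookup_path)
    enriched = deduped.join(F.broadcast(customers), on="customer_id", how="left")

    # Write: Parquet partitioned by a date derived from updated_at.
    # mode("overwrite") replaces whatever is already under out_path.
    (
        enriched.withColumn("dt", F.to_date("updated_at"))
        .write.mode("overwrite")
        .partitionBy("dt")
        .parquet(out_path)
    )
```

Calling `run_etl(spark, ...)` with an existing `SparkSession` and three HDFS paths would run the whole pass. Swapping `.parquet(out_path)` for `.orc(out_path)` gives the ORC variant.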

Outcome

- Turn messy raw files into a refined, query-ready Hive layer.

- Get faster, cheaper queries from columnar Parquet/ORC storage combined with partitioning and bucketing.

- Run the ETL hands-off with cron/Airflow.

What you’ll build

- PySpark ETL job/notebook (read → transform → write).

- Validation/dedupe + lookup-join examples.

- Partition/bucketing config for Parquet/ORC outputs.

- Hive external-table DDL on refined data.

- Airflow DAG or cron-driven shell script to schedule the job.
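
As one possible shape for the Hive external-table DDL item above, the sketch below builds a `CREATE EXTERNAL TABLE` statement for a refined path that already exists. The helper `external_table_ddl`, the table name `refined.orders`, the columns, and the HDFS location are all hypothetical and chosen only for illustration.

```python
from typing import Dict


def external_table_ddl(
    table: str,
    columns: Dict[str, str],
    partitions: Dict[str, str],
    location: str,
    stored_as: str = "PARQUET",
) -> str:
    """Build a CREATE EXTERNAL TABLE statement over an existing data path."""
    cols = ",\n  ".join(f"`{name}` {dtype}" for name, dtype in columns.items())
    parts = ", ".join(f"`{name}` {dtype}" for name, dtype in partitions.items())
    return (
        f"CREATE EXTERNAL TABLE IF NOT EXISTS {table} (\n  {cols}\n)\n"
        f"PARTITIONED BY ({parts})\n"
        f"STORED AS {stored_as}\n"
        f"LOCATION '{location}'"
    )


# Hypothetical table definition; partition columns are declared separately
# from data columns because their values live in the directory names.
ddl = external_table_ddl(
    table="refined.orders",
    columns={
        "order_id": "STRING",
        "customer_id": "STRING",
        "amount": "STRING",
        "updated_at": "STRING",
    },
    partitions={"dt": "STRING"},
    location="hdfs:///data/refined/orders",
)
print(ddl)
```

The resulting string could be run with `spark.sql(ddl)` on a Hive-enabled `SparkSession` or pasted into a Hive client. Because the table is external, dropping it leaves the files in place. Partitions written directly to the path are not visible until they are registered, for example with `MSCK REPAIR TABLE refined.orders`.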