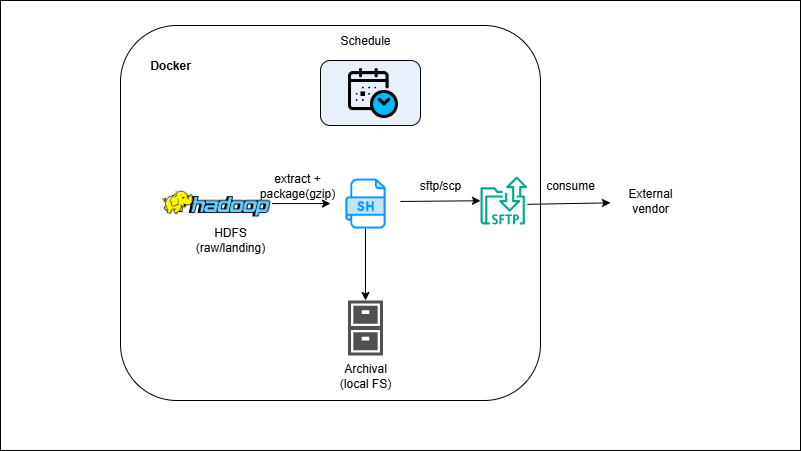

HDFS → Shell → SFTP Partner Delivery (Reverse ETL)

Overview

You’ll pull curated partitions from HDFS into a local staging folder, generate CSV/Parquet exports, compress them, and create a checksum/manifest. The script then connects to the partner’s SFTP server over SSH with key-based authentication, uploads with retries, verifies integrity, and moves the delivered files to an archive for audit. Date-stamped filenames and idempotent logic prevent duplicate deliveries; cron or Airflow schedules the run.
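The packaging step described above could look roughly like the following sketch. It assumes GNU `sha256sum` is available and that a file already present in `sent/` means the feed for that date was delivered; the function name `package_export`, the `<feed>_<date>.csv.gz` naming pattern, and the argument order are illustrative assumptions, not a fixed convention.

```shell
# Sketch only: package one staged export with a date-stamped name and a
# SHA-256 manifest. Function name, naming pattern, and arguments are assumptions.
# Args: <source file> <feed name> <run date YYYYMMDD> <outbox dir> <sent dir>
package_export() {
  local src="$1" feed="$2" run_date="$3" outbox="$4" sent="$5"
  local name="${feed}_${run_date}.csv.gz"

  # Idempotency (assumed rule): a file in sent/ means this feed/date is done.
  if [ -e "${sent}/${name}" ]; then
    echo "skip: ${name} already sent"
    return 0
  fi

  mkdir -p "$outbox" || return 1

  # Compress to a temporary name first so a partial file never looks complete.
  gzip -c "$src" > "${outbox}/${name}.tmp" || return 1
  mv "${outbox}/${name}.tmp" "${outbox}/${name}" || return 1

  # Manifest line format from sha256sum: "<hex digest>  <file name>".
  # Running inside outbox/ keeps the recorded file name relative.
  (cd "$outbox" && sha256sum "$name" > "${name}.sha256") || return 1

  echo "packaged: ${name}"
}
```

Because the manifest stores a relative file name, it can be checked with `sha256sum -c` from inside whichever folder holds the `.gz` file and its `.sha256` file together.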

Outcome

- Reliable, auditable SFTP handoffs from the lake to external parties.

- Integrity & compliance via manifest/checksum + local archive.

- Hands-off runs with SSH keys and cron/Airflow.

What you’ll build

- Shell scripts to select HDFS partitions (`hdfs dfs -get/-copyToLocal`), format the output as CSV/Parquet, and add header/footer records.

- Packaging and compression (`gzip/zip`) plus a checksum/manifest generator (MD5 or SHA-256).

- SFTP delivery using OpenSSH `sftp/scp` (or Python Paramiko) with retries and exit-code checks.

- Key management (`ssh-keygen`, `known_hosts`) and folders: `outbox/`, `sent/`, `archive/`, `error/`.

- Optional: Airflow/cron wrapper + success/failure notification.
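
The delivery piece (retries, exit-code checks, and routing files into `sent/` or `error/`) might be sketched as below. The function names `retry`, `sftp_put`, and `deliver`, and the `MAX_ATTEMPTS` and `RETRY_DELAY` variables, are hypothetical; the sketch assumes key-based login is already set up and the partner's host key is already in `known_hosts`.

```shell
# Sketch only: function and variable names here are illustrative assumptions.

# Run a command up to <max_attempts> times, pausing <delay> seconds between tries.
# Args: <max_attempts> <delay seconds> <command> [args...]
retry() {
  local max_attempts="$1" delay="$2"
  shift 2
  local attempt=1
  while true; do
    if "$@"; then
      return 0
    fi
    if [ "$attempt" -ge "$max_attempts" ]; then
      echo "failed after ${attempt} attempts: $*" >&2
      return 1
    fi
    echo "attempt ${attempt} failed, retrying in ${delay}s" >&2
    attempt=$((attempt + 1))
    sleep "$delay"
  done
}

# Upload one file with OpenSSH sftp in batch mode, reading commands from stdin.
# In batch mode sftp exits non-zero if the put fails, which retry() relies on.
# Args: <local file> <user@host> <remote dir>
sftp_put() {
  local file="$1" target="$2" remote_dir="$3"
  printf 'put "%s" "%s/"\n' "$file" "$remote_dir" |
    sftp -b - -o BatchMode=yes "$target"
}

# Deliver a file, then move it to sent/ on success or error/ on failure.
# Args: <local file> <user@host> <remote dir> <sent dir> <error dir>
deliver() {
  local file="$1" target="$2" remote_dir="$3" sent="$4" error="$5"
  mkdir -p "$sent" "$error" || return 1
  if retry "${MAX_ATTEMPTS:-3}" "${RETRY_DELAY:-30}" \
       sftp_put "$file" "$target" "$remote_dir"; then
    mv "$file" "$sent/"
  else
    mv "$file" "$error/"
    return 1
  fi
}
```

A cron or Airflow wrapper could call `deliver` once per file in `outbox/` and use its exit code to decide whether to send a success or failure notification.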