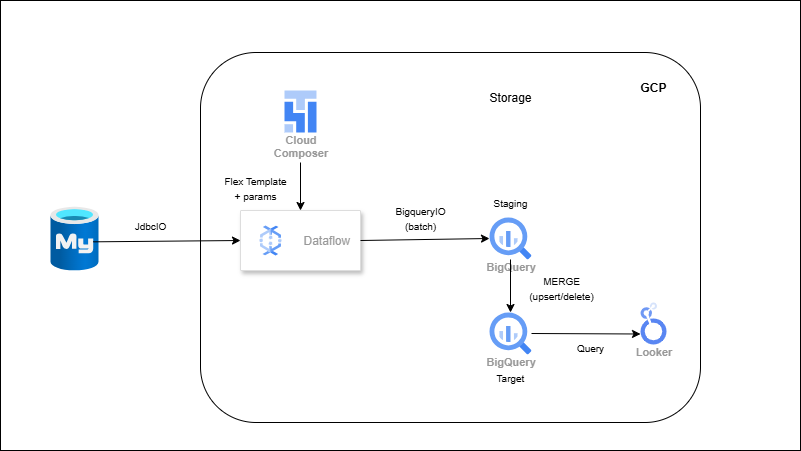

RDBMS → Dataflow (Flex Template) → BigQuery (Batch Ingestion)

Overview

You’ll package a Dataflow Flex Template that reads from MySQL with JdbcIO using partitioned queries and loads the rows in batches into partitioned, clustered BigQuery tables with BigQueryIO. Runtime parameters (JDBC URL, source table, query, BigQuery table, batch size) make the job reusable across sources. A validation step compares source and target row counts, and Cloud Composer or Cloud Scheduler triggers recurring runs.
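A partitioned read works by splitting a numeric key range into contiguous, non-overlapping slices. Each slice becomes one bounded query, so workers can read in parallel. The sketch below is a minimal plain-Python illustration of that idea, not Beam's own splitting code. In the pipeline, JdbcIO's partitioned read (e.g., `JdbcIO.readWithPartitions()` in recent Beam Java SDK releases) handles the splitting. The table `orders`, the column `id`, and both helper functions are hypothetical.

```python
from typing import List, Tuple


def partition_ranges(lower: int, upper: int, num_partitions: int) -> List[Tuple[int, int]]:
    """Split the inclusive key range [lower, upper] into contiguous half-open
    slices [start, end) that together cover every key exactly once."""
    if num_partitions < 1:
        raise ValueError("num_partitions must be >= 1")
    if upper < lower:
        raise ValueError("upper must be >= lower")
    total = upper - lower + 1                      # number of keys in the inclusive range
    num_partitions = min(num_partitions, total)    # never create empty slices
    base, extra = divmod(total, num_partitions)
    ranges = []
    start = lower
    for i in range(num_partitions):
        # The first `extra` slices take one additional key to absorb the remainder.
        size = base + (1 if i < extra else 0)
        ranges.append((start, start + size))
        start += size
    return ranges


def partition_queries(table: str, column: str, lower: int, upper: int,
                      num_partitions: int) -> List[str]:
    """Hypothetical helper: one bounded SELECT per slice.
    Identifiers are interpolated directly, so they must come from trusted config."""
    return [
        f"SELECT * FROM {table} WHERE {column} >= {start} AND {column} < {end}"
        for start, end in partition_ranges(lower, upper, num_partitions)
    ]


if __name__ == "__main__":
    for query in partition_queries("orders", "id", 1, 10, 3):
        print(query)
```

With keys 1 to 10 and three partitions, the slices are [1, 5), [5, 8) and [8, 11). Half-open bounds keep a boundary key from being read twice. Equal key ranges only produce equal amounts of work when keys are spread roughly evenly, so gaps or skew in the key column lead to uneven partitions.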
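The row-count validation step comes down to comparing two numbers for each run. Here is a minimal sketch. It assumes both counts have already been fetched, for example with `SELECT COUNT(*)` against MySQL and against the BigQuery target, filtered to the same load window. The `CountCheck` type and the `validate_counts` helper are hypothetical names used for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CountCheck:
    source_rows: int
    target_rows: int

    @property
    def difference(self) -> int:
        # Positive: rows missing in BigQuery; negative: extra rows (e.g., duplicates from a rerun).
        return self.source_rows - self.target_rows

    @property
    def passed(self) -> bool:
        return self.difference == 0


def validate_counts(source_rows: int, target_rows: int) -> CountCheck:
    """Hypothetical helper: compare source and target row counts and raise on mismatch."""
    if source_rows < 0 or target_rows < 0:
        raise ValueError("row counts cannot be negative")
    check = CountCheck(source_rows, target_rows)
    if not check.passed:
        raise RuntimeError(
            f"row count mismatch: source={source_rows}, target={target_rows}, "
            f"difference={check.difference}"
        )
    return check
```

The helper raises instead of only logging. If it runs inside an orchestrator task, such as a Composer (Airflow) Python task, the exception marks the run as failed.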

Outcome

- Scalable, fault-tolerant RDBMS → BigQuery ingestion.

- Partitioned tables with an explicit schema for faster, cheaper analytics (queries that filter on the partition column scan less data).

- Automated runs via Cloud Composer or Cloud Scheduler.
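One way to get a partitioned table with a defined schema is to create it up front with BigQuery DDL, instead of letting the load infer a schema. The sketch below renders such a statement. It partitions by day on a DATE, TIMESTAMP, or DATETIME column and optionally clusters on up to four columns. The dataset `sales`, the table `transactions`, its columns, and the `build_create_table_ddl` helper are hypothetical.

```python
from typing import Dict, Sequence


def build_create_table_ddl(
    table_ref: str,
    schema: Dict[str, str],
    partition_column: str,
    cluster_by: Sequence[str] = (),
) -> str:
    """Hypothetical helper: render a day-partitioned, optionally clustered
    BigQuery CREATE TABLE statement."""
    if partition_column not in schema:
        raise ValueError(f"partition column {partition_column!r} not in schema")
    # Use the bare type name, ignoring modifiers such as NOT NULL.
    base_type = schema[partition_column].split()[0].upper()
    if base_type == "DATE":
        partition_expr = partition_column
    elif base_type in ("TIMESTAMP", "DATETIME"):
        partition_expr = f"DATE({partition_column})"
    else:
        raise ValueError("this sketch only covers DATE/TIMESTAMP/DATETIME partitioning")
    if len(cluster_by) > 4:
        raise ValueError("BigQuery allows at most 4 clustering columns")
    missing = [c for c in cluster_by if c not in schema]
    if missing:
        raise ValueError(f"clustering columns not in schema: {missing}")
    columns = ",\n".join(f"  {name} {col_type}" for name, col_type in schema.items())
    ddl = (f"CREATE TABLE IF NOT EXISTS `{table_ref}` (\n{columns}\n)\n"
           f"PARTITION BY {partition_expr}")
    if cluster_by:
        ddl += "\nCLUSTER BY " + ", ".join(cluster_by)
    return ddl


if __name__ == "__main__":
    print(build_create_table_ddl(
        "sales.transactions",
        {"id": "INT64 NOT NULL", "customer_id": "INT64",
         "amount": "NUMERIC", "created_at": "TIMESTAMP"},
        partition_column="created_at",
        cluster_by=["customer_id"],
    ))
```

The example prints a statement ending in `PARTITION BY DATE(created_at)` and `CLUSTER BY customer_id`. Filters on `created_at` can prune whole partitions, and clustering sorts rows within each partition by `customer_id`.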

What you’ll build

- A Dataflow Flex Template pipeline using JdbcIO → BigQueryIO.

- Source DB (MySQL) with sample transactional data.

- Parallel, partitioned reads (e.g., split on a numeric primary key or a date column).

- A BigQuery dataset and tables with partitioning and clustering.

- (Optional) Composer DAG or Scheduler job to trigger the template.
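A Cloud Scheduler HTTP job or a Composer task can start each run by POSTing to the Dataflow REST method `projects.locations.flexTemplates.launch` (`https://dataflow.googleapis.com/v1b3/projects/PROJECT_ID/locations/REGION/flexTemplates:launch`, where `PROJECT_ID` and `REGION` are placeholders). The sketch below only assembles the JSON request body. The template path, the parameter names (`jdbcUrl`, `sourceTable`, `outputTable`, `batchSize`), and the `build_launch_body` helper are hypothetical. The real parameter names must match whatever the template's metadata declares.

```python
import json
import re
from datetime import date
from typing import Mapping


def build_launch_body(
    template_gcs_path: str,
    job_prefix: str,
    run_date: date,
    parameters: Mapping[str, object],
) -> str:
    """Hypothetical helper: JSON body for a flexTemplates.launch request.

    The keys in `parameters` are assumptions; they must match the names the
    template's metadata file actually declares.
    """
    # Dataflow job names: lowercase letters, digits and hyphens, starting with a letter.
    raw_name = f"{job_prefix}-{run_date.isoformat()}".lower()
    job_name = re.sub(r"[^a-z0-9-]", "-", raw_name).strip("-")
    if not job_name or not job_name[0].isalpha():
        raise ValueError(f"invalid job name derived from prefix: {job_prefix!r}")
    body = {
        "launchParameter": {
            "jobName": job_name,
            "containerSpecGcsPath": template_gcs_path,
            # Template parameters are sent as strings.
            "parameters": {key: str(value) for key, value in parameters.items()},
        }
    }
    return json.dumps(body, indent=2, sort_keys=True)


if __name__ == "__main__":
    print(build_launch_body(
        "gs://my-bucket/templates/jdbc-to-bq.json",   # hypothetical template spec path
        "orders_ingest",
        date(2024, 1, 15),
        {
            # Avoid embedding database credentials directly in the URL.
            "jdbcUrl": "jdbc:mysql://10.0.0.5:3306/shop",
            "sourceTable": "orders",
            "outputTable": "my-project:sales.transactions",
            "batchSize": 1000,
        },
    ))
```

Adding the run date to the job name gives each scheduled run a distinct, readable name, such as `orders-ingest-2024-01-15` in this example.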