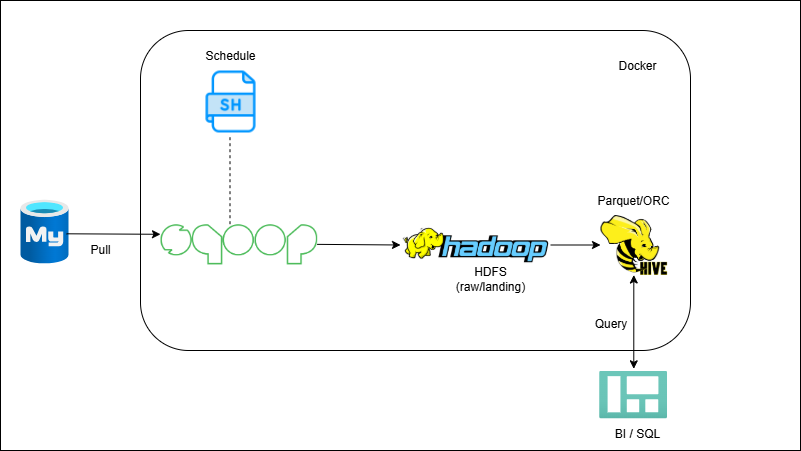

RDBMS → Sqoop → HDFS/Hive (Batch Ingestion)

Overview

You’ll configure Sqoop to pull data from an OLTP database (MySQL in this lab) into HDFS: first a full load, then incremental loads driven by a watermark column such as a last-modified timestamp. The data is stored in a columnar format (Parquet or ORC) and exposed through partitioned Hive tables, either external or managed. Shell scripts scheduled with cron make the batch ingestion repeatable.
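
The full-then-incremental pattern described above could be sketched in shell roughly as follows. This is a minimal illustration, not the lab's actual script: the JDBC URL, user, password file, table, column, and target directory are all hypothetical placeholders. It defaults to a dry run that only prints the command it would execute.

```shell
#!/bin/sh
# Sketch: one function that issues a full load when no watermark is given
# and an incremental load otherwise. All names below are hypothetical.

JDBC_URL="jdbc:mysql://mysql-host:3306/shop"   # hypothetical source database
SRC_TABLE="orders"                             # hypothetical table
CHECK_COLUMN="updated_at"                      # hypothetical last-modified column
TARGET_DIR="/data/staging/orders"              # hypothetical HDFS directory

# $1 = last watermark value; an empty string means "do a full load".
run_import() {
  last_value="$1"

  # Arguments shared by the full and incremental imports.
  set -- sqoop import \
    --connect "$JDBC_URL" \
    --username etl \
    --password-file /user/etl/.mysql.pw \
    --table "$SRC_TABLE" \
    --target-dir "$TARGET_DIR" \
    --num-mappers 4

  # With a watermark, import only rows modified since that value.
  if [ -n "$last_value" ]; then
    set -- "$@" --incremental lastmodified \
      --check-column "$CHECK_COLUMN" \
      --last-value "$last_value" \
      --append
  fi

  # DRY_RUN defaults to 1 so the sketch is safe to try without a cluster.
  if [ "${DRY_RUN:-1}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}
```

Calling `run_import ""` prints the full-load command, and `run_import "2024-01-01 00:00:00"` prints the incremental one. The sketch uses `--append`, so re-imported rows land as new files; deduplicating them (for example with `--merge-key`) is left out here. Sqoop saved jobs (`sqoop job --create`) can also track the last value for you, which may be preferable to passing it by hand.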

Outcome

- Ingest tables from MySQL into HDFS with Sqoop, as a full load followed by incremental loads.

- Expose data via Hive tables (Parquet/ORC, partitioned).

- Automate daily runs with shell scripts scheduled by cron.
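
To make the Hive outcome concrete, here is one way a shell helper might generate the DDL for a partitioned external Parquet table. It is a sketch under assumptions: the `raw` database, the table name, the column list, the `ingest_date` partition key, and the HDFS location are hypothetical, and the `raw` database is assumed to exist already.

```shell
#!/bin/sh
# Sketch: print Hive DDL that creates an external Parquet table (if missing)
# and registers one daily partition. All names below are hypothetical.

HIVE_TABLE="raw.orders"
TABLE_LOCATION="/data/warehouse/raw/orders"

# $1 = partition value, e.g. 2024-01-15
partition_ddl() {
  ingest_date="$1"
  cat <<EOF
CREATE EXTERNAL TABLE IF NOT EXISTS ${HIVE_TABLE} (
  id BIGINT,
  customer_id BIGINT,
  amount DECIMAL(12,2),
  updated_at TIMESTAMP
)
PARTITIONED BY (ingest_date STRING)
STORED AS PARQUET
LOCATION '${TABLE_LOCATION}';

ALTER TABLE ${HIVE_TABLE} ADD IF NOT EXISTS
  PARTITION (ingest_date='${ingest_date}')
  LOCATION '${TABLE_LOCATION}/ingest_date=${ingest_date}';
EOF
}
```

A typical use would be `partition_ddl 2024-01-15 > /tmp/orders.hql` followed by `hive -f /tmp/orders.hql`. Because the table is external, dropping it in Hive leaves the files in HDFS untouched; a managed table would remove them.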

What you’ll build

- Docker/EMR lab with Hadoop + Hive.

- Sqoop jobs: a full import plus an incremental import keyed on a last-modified column.

- Hive DDL for external/managed tables and partitions.

- Shell scripts and cron entries to orchestrate the jobs.
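
As a rough idea of what the orchestration piece could look like, the sketch below keeps the watermark in a small state file and advances it only when the import succeeds. The state directory, the wrapper script named in `IMPORT_CMD`, and the crontab paths are hypothetical. The sketch also assumes the scheduler host and the database agree on clock and time zone.

```shell
#!/bin/sh
# Sketch: daily driver that reads the last watermark, runs the import,
# and moves the watermark forward only on success. Names are hypothetical.

STATE_DIR="${STATE_DIR:-/var/lib/ingest}"
WATERMARK_FILE="${WATERMARK_FILE:-$STATE_DIR/orders.watermark}"
IMPORT_CMD="${IMPORT_CMD:-/opt/ingest/sqoop_orders.sh}"  # hypothetical wrapper; takes the watermark as $1

# Print the stored watermark, or nothing if this is the first run.
read_watermark() {
  if [ -f "$WATERMARK_FILE" ]; then
    cat "$WATERMARK_FILE"
  fi
}

daily_run() {
  last_value=$(read_watermark)
  # Capture the next watermark before the import starts, so rows modified
  # while the import is running are picked up by the following run.
  next_value=$(date '+%Y-%m-%d %H:%M:%S')

  if "$IMPORT_CMD" "$last_value"; then
    mkdir -p "$STATE_DIR"
    printf '%s\n' "$next_value" > "$WATERMARK_FILE"
  else
    echo "import failed; watermark left at '${last_value}'" >&2
    return 1
  fi
}

# Example crontab entry (runs at 02:15 every day), assuming this file is
# saved as /opt/ingest/daily_orders.sh and ends with a call to daily_run:
# 15 2 * * * /opt/ingest/daily_orders.sh >> /var/log/ingest/orders.log 2>&1
```

On the first run the watermark file does not exist, so the wrapper receives an empty string and can treat that as the signal for a full load. Leaving the watermark unchanged after a failure means the next run retries the same window instead of skipping it.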