Vertex AI → Predict → BigQuery (ML Pipeline)

Overview

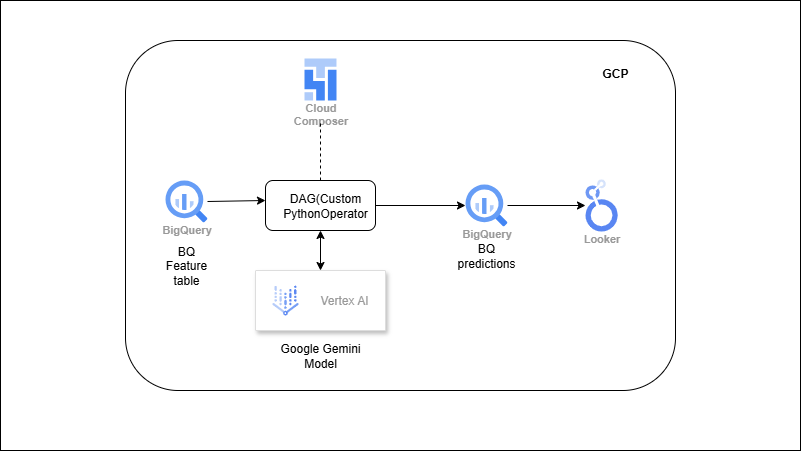

You’ll curate a BigQuery features table, then point a Vertex AI model (AutoML or custom) at those features for batch prediction. Predictions land in GCS as files, which are then loaded back into a BigQuery table (e.g., `predictions_churn_daily`) with keys, timestamps, and scores. A scheduler (Composer) runs the flow on a regular cadence and can also kick off model retraining when enough new data accumulates, which keeps predictions fresh for BI and downstream activation.
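The step that turns prediction files into BigQuery rows can be sketched as below. This is a minimal illustration, not the definitive implementation. It assumes each output line is a JSON object with `instance` and `prediction` keys, and that `prediction` is a single numeric score; the actual layout depends on your model type and output format. The key field `customer_id` and the column names `predicted_at` and `score` are hypothetical.

```python
import json
from datetime import datetime, timezone


def prediction_lines_to_rows(lines, key_field="customer_id", predicted_at=None):
    """Flatten JSON Lines prediction output into rows of key, timestamp, score.

    Assumption: every non-empty line looks like
    {"instance": {<key_field>: ...}, "prediction": <number>}.
    """
    # Stamp all rows from one batch with the same timestamp.
    stamp = (predicted_at or datetime.now(timezone.utc)).isoformat()
    rows = []
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines between records
        record = json.loads(line)
        rows.append(
            {
                key_field: record["instance"][key_field],
                "predicted_at": stamp,
                "score": float(record["prediction"]),
            }
        )
    return rows


sample = [
    '{"instance": {"customer_id": "c1"}, "prediction": 0.8}',
    "",
    '{"instance": {"customer_id": "c2"}, "prediction": 0.25}',
]
rows = prediction_lines_to_rows(
    sample, predicted_at=datetime(2024, 1, 1, tzinfo=timezone.utc)
)
```

Rows in this shape can be written back out as newline-delimited JSON and loaded into a table such as `predictions_churn_daily`. Passing `predicted_at` explicitly keeps reruns of the same batch reproducible.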

Outcome

- Production-style ML embedded in your data pipeline (batch, reliable).

- Seamless handoff: features from BigQuery → predictions back to BigQuery.

- Operational cadence with scheduled prediction + periodic retraining.

What you’ll build

- A features table in BigQuery (joins/aggregations over raw data).

- A Vertex AI dataset and model (AutoML or a pre-trained custom model).

- A batch prediction job (source from BigQuery or GCS) that writes outputs to GCS.

- A load step that ingests predictions (scores, labels) back into BigQuery.

- (Optional) A Looker or Looker Studio (formerly Data Studio) report on top of the predictions.

- (Optional) Composer DAG to orchestrate export → predict → load → report refresh.

- (Optional) Periodic retraining job that reuses the latest features.
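The orchestration order and the retraining trigger from the list above can be sketched in plain Python. This is only an illustration of the control flow: in Composer each step would be an Airflow task, and the step names, the `retrain_threshold` value, and the choice to retrain after the current predictions are loaded are all assumptions rather than details from this guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunPlan:
    steps: tuple   # ordered step names for this run
    retrain: bool  # whether retraining was triggered


def plan_run(new_feature_rows, retrain_threshold=50_000, refresh_report=True):
    """Decide which pipeline steps a scheduled run should execute.

    Assumption: retraining is triggered once the number of feature rows
    added since the last training run reaches `retrain_threshold`.
    """
    # Core flow: export features, run batch prediction, load results.
    steps = ["export_features", "batch_predict", "load_predictions"]
    if refresh_report:
        steps.append("refresh_report")
    retrain = new_feature_rows >= retrain_threshold
    if retrain:
        # Retrain last so this run still scores with the current model;
        # the next scheduled run picks up the new one.
        steps.append("retrain_model")
    return RunPlan(steps=tuple(steps), retrain=retrain)


daily = plan_run(new_feature_rows=10)
with_retrain = plan_run(new_feature_rows=60_000, refresh_report=False)
```

Keeping the decision in a small pure function makes the retraining rule easy to unit test before it is wired into a DAG.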