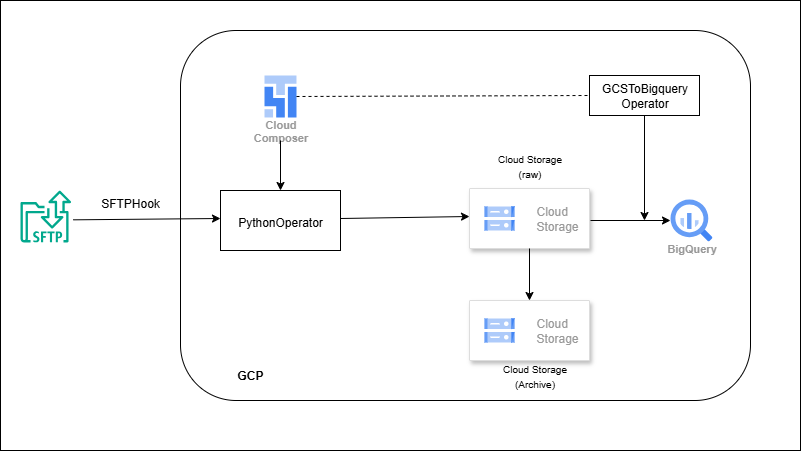

SFTP → Composer (Airflow) → GCS → BigQuery

Overview

Composer runs an Airflow DAG on a schedule (or trigger). Tasks connect to the vendor’s SFTP using key-based auth, pull new CSV/JSON, place them in a raw GCS bucket, validate (naming, row counts, optional schema), then load into BigQuery staging/partitioned tables. Success moves files to an archive; failures raise alerts. DAG parameters (SFTP path, GCS prefix, BQ table) make the same flow reusable across vendors.

Outcome

- Secure, parameterized vendor file ingestion managed by Composer.

- Reliable loads to BigQuery with schema/row checks and partitions.

- Operational hygiene: archiving + failure notifications.

What you’ll build

- An Airflow DAG that:

-

- Connects to SFTP (key-based), fetches new files, and lands them in GCS.

- Runs validations (naming, schema/row count).

- Loads to BigQuery staging/partitioned tables.

- Archives source files after success; alerts on failure (email/Slack).

- Reusable DAG params to support multiple vendors/paths.